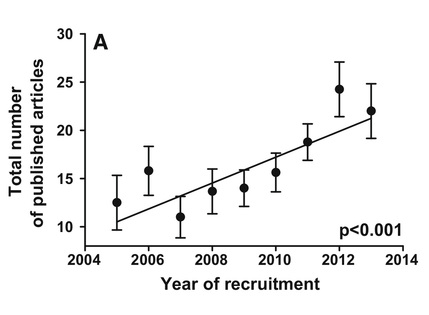

Figure from Brischoux and Angelier, showing the number of publications of successful CNRS applicants, from 2005 to 2013. There is a strong, steady increase in what is required of a successful candidate, with applicants now expected to have 22 papers from 8 years in academia, compared to just 12 papers from 3 years in 2005.

We often feel that the bar is rising higher and higher in evolutionary biology. As young researchers hoping to build successful academic careers, we have the uneasy feeling that we must publish more papers in better journals (whatever that means), get more citations and boost our h-index. Now Brischoux and Angelier's 2015 paper has provided concrete evidence that this uneasy feeling is worryingly accurate.

The authors examined the profiles of researchers who were granted CNRS 'junior researcher' positions between 2005 and 2013. They found that in that short time the number of papers needed for success nearly doubled, from around 12 to over 20. Career duration and the number of papers published per year also rose sharply.

So what is driving this? Isn't it a good thing that young researchers are becoming more productive? The authors argue that the 'publish or perish' dogma keeps young researchers on precarious contracts for longer. Worryingly, this may push some strong scientists out of academia before they become established. Even more worryingly, their findings show that the impact factor of the published research is not rising, which suggests that young scientists are not taking risks or being creative, and that the 'quality' of science is not increasing. Instead, early career researchers may be 'salami-slicing' (i.e., dividing findings into the minimum publishable unit, the MPU or 'publon') with the specific goal of getting more papers out of a dataset, rather than publishing creative or elegant findings.

There are other possible explanations. Perhaps evolutionary biology is seeing more and more papers with long author lists, and young researchers in recent years have benefited from this. CNRS recruitment would then merely reflect this trend by raising its standards for acceptance. It would be interesting to repeat the analysis using only first-author papers.

The dataset would also offer a fantastic opportunity to relate the quantity of publications to their 'quality', yet the authors have not examined this. Are those who publish most frequently producing lower-impact papers (fewer citations, lower impact-factor journals, etc.)? Are there two distinct strategies: 'trigger-happy' publishers producing many lower-impact papers, and other researchers producing fewer papers but in higher impact-factor journals? Which strategy is more likely to lead to CNRS glory?

The paper also drew my attention to Scientometrics, a journal dedicated to statistical assessments of the quality, development and mechanisms of science. What could be better? It is full of interesting papers!